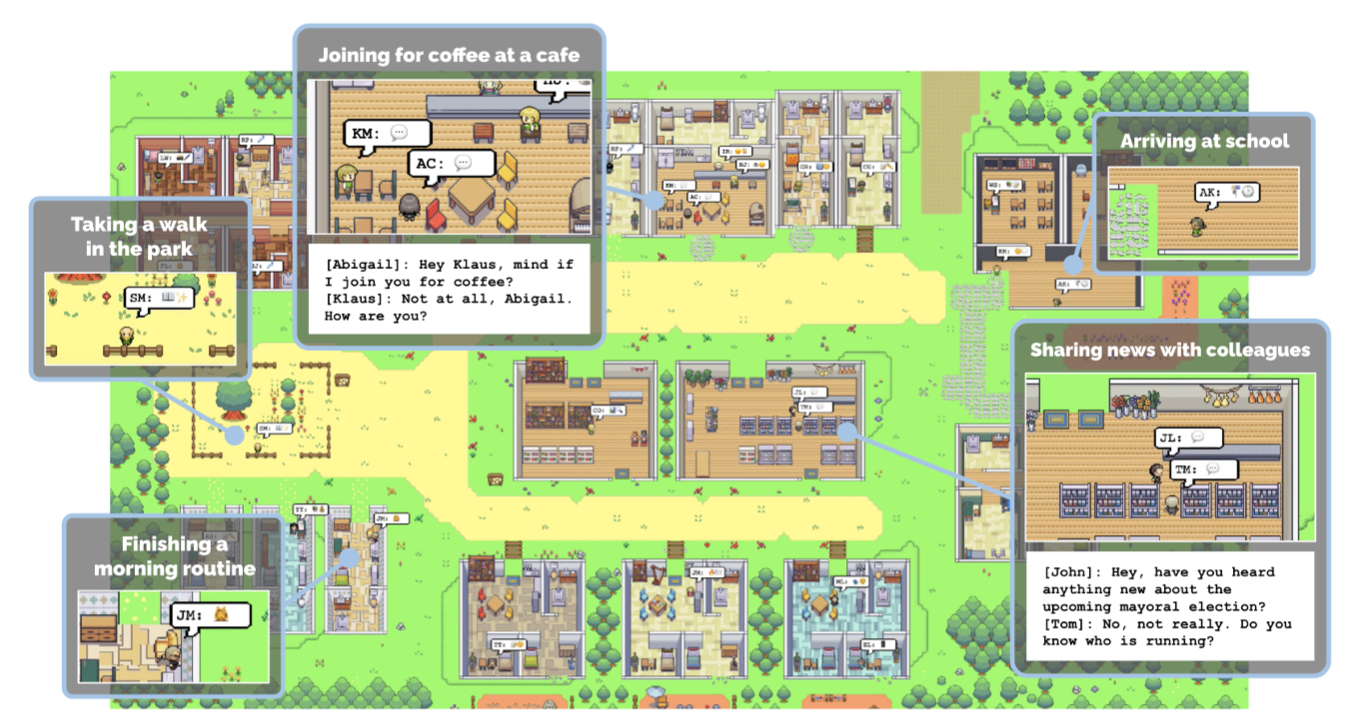

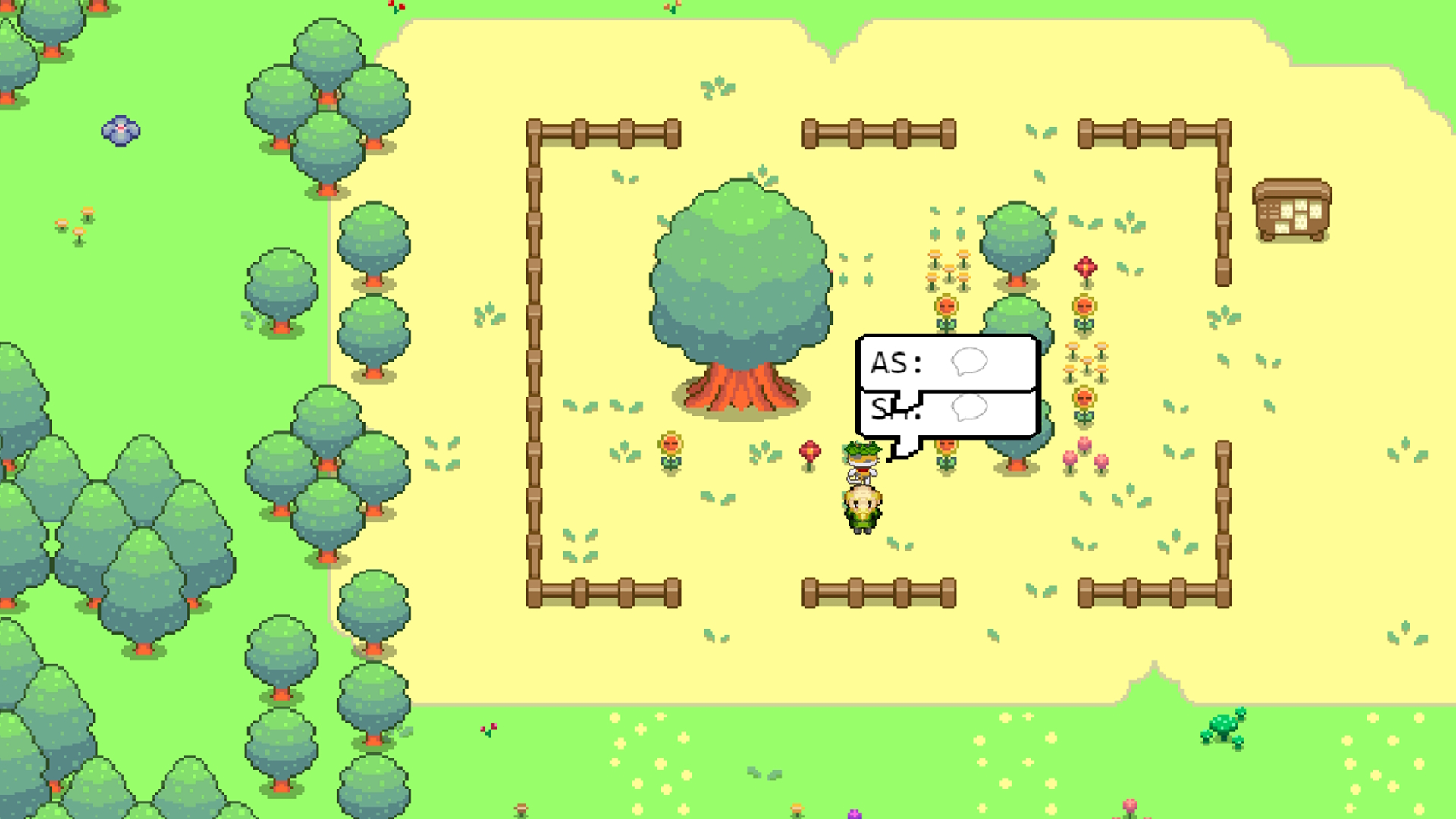

Last week, a few of us were briefly captivated by the simulated lives of “generative agents” created by researchers from Stanford and Google. Led by PhD student Joon Sung Park, the research team populated a pixel art world with 25 NPCs whose actions were guided by ChatGPT and an “agent architecture that stores, synthesizes, and applies relevant memories to generate believable behavior.” The result was both mundane and compelling.

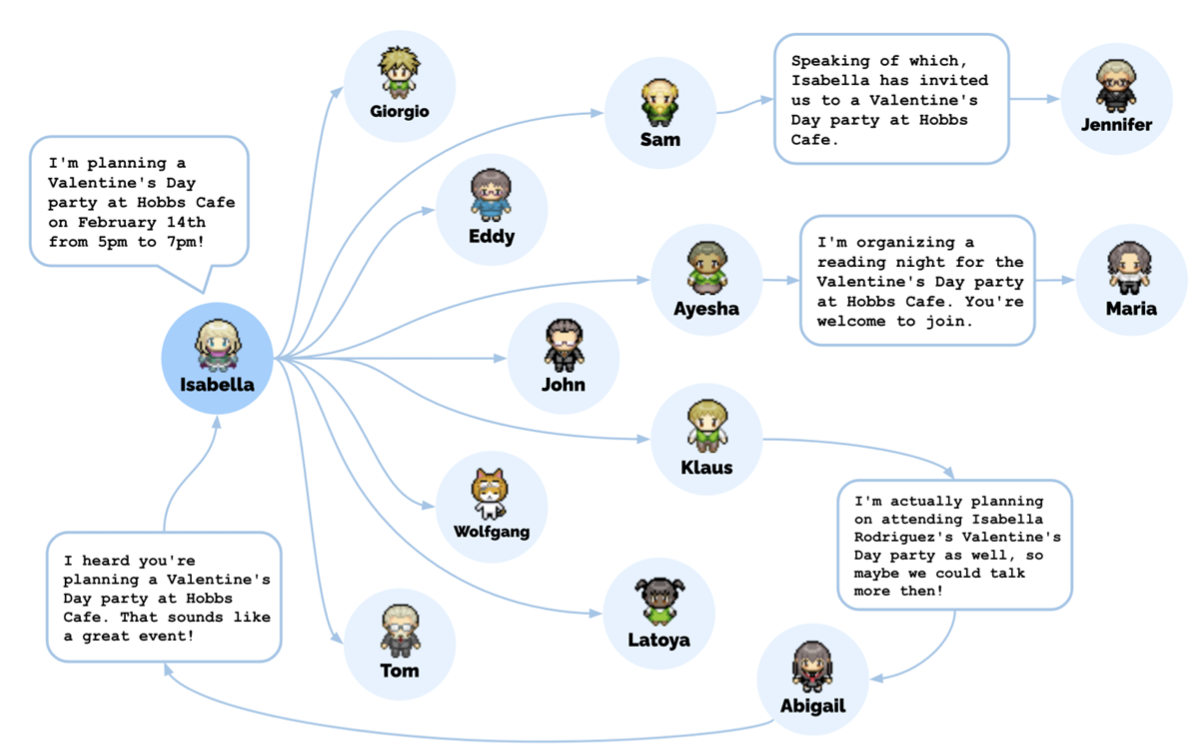

One of the agents, Isabella, invited some of the other agents to a Valentine’s Day party, for instance. As word of the party spread, new acquaintances were made, dates were set up, and eventually the invitees arrived at Isabella’s place at the right time. Not exactly riveting stuff, but all that behavior began as one “user-specified notion” that Isabella wanted to throw a Valentine’s Day party. The activity that emerged happened between the large language model, the agent architecture, and an “interactive sandbox environment” inspired by The Sims. Giving Isabella a different notion, like that she wanted to punch everyone in the town, would’ve led to an entirely different sequence of behaviors.

Along with other simulation applications, the researchers think their model could be used to “underpin non-playable game characters that can navigate complex human relationships in an open world.”

The project reminds me a little of Maxis’ doomed 2013 SimCity reboot, which promised to simulate a city down to its individual inhabitants with thousands of crude little agents that drove to and from work and hung out at parks. A version of SimCity that used these far more advanced generative agents would be enormously complex, and not possible in a videogame right now in terms of computational cost. But Park doesn’t think it’s far-fetched to imagine a future game operating at that level.

The full paper, titled “Generative Agents: Interactive Simulacra of Human Behavior,” is available online, and it also catalogs the project’s flaws (the agents have a habit of embellishing, for example) and ethical concerns.

Below is a conversation I had with Park about the project last week. It has been edited for length and clarity.

PC Gamer: We’re obviously interested in your project as it relates to game design. But what led you to this research? Was it games, or something else?

Joon Sung Park: There are sort of two angles on this. One is that this idea of creating agents that exhibit really believable behavior has been something our field has dreamed about for a very long time, and it’s something we sort of forgot about, because we realized it was too difficult, that we didn’t have the right ingredient to make it work.

What we recognized when large language models came out, like GPT-3 a few years back, and now ChatGPT and GPT-4, is that these models, which are trained on raw data from the social web, Wikipedia, and basically the internet, have so much in their training data about how we behave, how we talk to each other, and how we do things, that if we poke them at the right angle, we can actually retrieve that information and generate believable behavior. Or basically, they become the sort of fundamental blocks for building these kinds of agents.

So we tried to imagine, ‘What is the most extreme, out-there thing we could possibly do with that idea?’ And our answer came out to be, ‘Can we create NPC agents that behave in a realistic way? And that have long-term coherence?’ That was the last piece we definitely wanted in there, so that we could actually talk to these agents and they would remember each other.

Another angle is that I think my advisor enjoys gaming, and I enjoyed gaming when I was younger, so this was always sort of our childhood dream to some extent, and we were eager to give it a shot.

I know you set the ball rolling on certain interactions you wanted to see happen in your simulation, like the party invitations, but did any behaviors emerge that you didn’t foresee?

There are some subtle things in there that we didn’t foresee. We didn’t expect Maria to ask Klaus out. That was kind of a fun thing to see when it actually happened. We knew that Maria had a crush on Klaus, but there was no guarantee that a lot of these things would actually happen. So seeing that happen, the whole thing was sort of unexpected.

In retrospect, even the fact that they decided to have the party. We knew that Isabella would be there, but the fact that other agents would not only hear about it, but actually decide to come and plan their day around it: we hoped something like that might happen, but when it did, it sort of surprised us.

It’s tough to talk about these things without using anthropomorphic words, right? We say the bots “made plans” or “understood each other.” How much sense does it make to talk like that?

Right. There’s a careful line we’re trying to walk here. My background and my team’s background is academic. We’re scholars in this field, and we see our role as being as grounded as we can be. And we’re extremely cautious about anthropomorphizing these agents, or any kind of computational agents in general. So when we say these agents “plan” and “reflect,” we mean it more in the sense that a Disney character is planning to attend a party, right? Because we can say “Mickey Mouse is planning a tea party” with a clear understanding that Mickey Mouse is a fictional character, an animated character, and nothing beyond that. When we say these agents “plan,” we mean it in that sense, and not that there’s actually something deeper going on. So you can basically imagine them as caricatures of our lives. That’s what they’re meant to be.

There’s a distinction between the behavior that comes out of the language model and behavior that comes from something the agent “experienced” in the world it inhabits, right? When the agents talk to each other, they might say “I slept well last night,” but they didn’t. They’re not referring to anything, just mimicking what a person might say in that situation. So it seems like the ideal goal is that these agents can reference things that “actually” happened to them in the game world. You’ve used the word “coherence.”

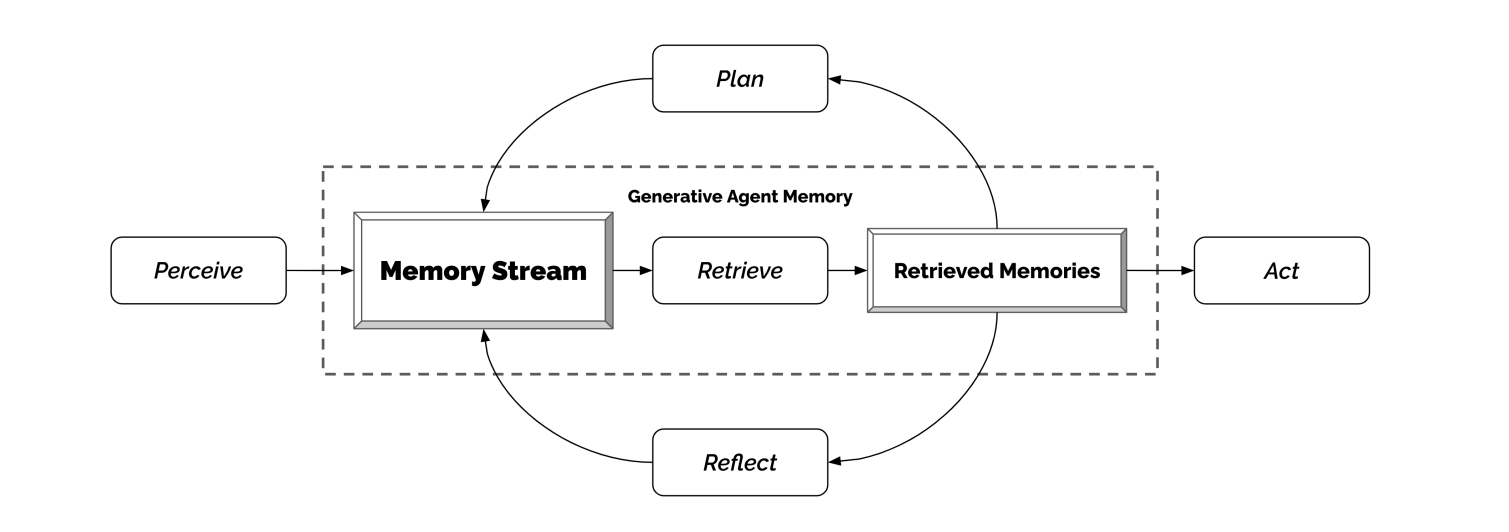

That’s exactly right. That idea is the main challenge for an interactive agent, and the main scientific contribution we’re making with this work. The challenge we’re trying to overcome is that these agents perceive an incredible amount of the game world. If you open up any of the state details and look at all the things they observe, and all the things they “think about,” it’s a lot. If you were to feed everything to a large language model, even today with GPT-4 and its really large context window, you couldn’t fit even half a day into that context window. And with ChatGPT, I would say, not even an hour’s worth of content.

So you need to be extremely careful about what you feed into your language model. You need to distill the context down to the key highlights that will best inform the agent in the moment, and then feed that into the large language model. That’s the main contribution we’re trying to make with this work.
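To make that idea of distilling context a little more concrete, here is a minimal Python sketch. It is not the research team’s code: it assumes each memory already carries some relevance score, uses a crude word count in place of a real tokenizer, and greedily keeps the highest-scoring memories that fit a fixed prompt budget. The `ScoredMemory`, `estimate_tokens`, and `select_context` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScoredMemory:
    text: str
    score: float  # hypothetical relevance score; higher means more useful right now


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def select_context(memories: list[ScoredMemory], budget: int) -> list[ScoredMemory]:
    """Greedily keep the highest-scoring memories that fit within `budget` tokens."""
    chosen: list[ScoredMemory] = []
    used = 0
    for mem in sorted(memories, key=lambda m: m.score, reverse=True):
        cost = estimate_tokens(mem.text)
        if used + cost <= budget:  # skip anything that would overflow the budget
            chosen.append(mem)
            used += cost
    return chosen


if __name__ == "__main__":
    memories = [
        ScoredMemory("the cup on the table is idle", 0.1),
        ScoredMemory("Isabella is throwing a Valentine's Day party", 0.9),
        ScoredMemory("Maria has a crush on Klaus", 0.6),
    ]
    for mem in select_context(memories, budget=14):
        print(mem.text)
```

With a 14-word budget, the party invitation and Maria’s crush make the cut and the idle cup is dropped. A real system would use an actual tokenizer and a far larger budget; greedy selection is simply the easiest strategy to show.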

What kind of context data are the agents perceiving in the game world? More than their location and their conversations with other NPCs? I’m surprised by the volume of data you’re talking about here.

The perception these agents have is designed quite simply: it’s basically their vision. They can perceive everything within a certain radius, including every agent, themselves included, so they do a lot of self-observation as well. Let’s say there’s a Joon Park agent. Then I would be observing not only Tyler on the other side of the screen, but also Joon Park talking to Tyler. So there’s a lot of self-observation and observation of other agents, and the space also has states that the agent observes.

A lot of the states are actually quite simple. Let’s say there’s a cup, and the cup is on the table. These agents will just say, ‘Oh, the cup is idle.’ That’s the term we use to mean ‘it’s doing nothing.’ But all of those states go into their memories. And there are a lot of things in the environment; it’s quite a rich environment these agents have. All of that goes into their memory.
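Park’s description of perception as a vision radius, where an agent logs every entity in range (itself included) along with simple states like an idle cup, can be sketched in a few lines. This assumes a flat 2D world, straight-line distance, and plain-text observations; the `Entity` and `perceive` names, the coordinates, and the radius are all hypothetical rather than taken from the project.

```python
import math
from dataclasses import dataclass


@dataclass
class Entity:
    name: str
    x: float
    y: float
    status: str  # e.g. "idle" for an object that is doing nothing


def perceive(observer: Entity, world: list[Entity], radius: float) -> list[str]:
    """Return an observation for every entity within `radius` of the observer.

    The observer is itself part of `world`, so it observes itself too.
    """
    observations = []
    for entity in world:
        if math.dist((observer.x, observer.y), (entity.x, entity.y)) <= radius:
            observations.append(f"{entity.name} is {entity.status}")
    return observations


if __name__ == "__main__":
    joon = Entity("Joon Park", 0, 0, "talking to Tyler")
    tyler = Entity("Tyler", 1, 0, "talking to Joon Park")
    cup = Entity("cup", 2, 1, "idle")
    far_away = Entity("Isabella", 50, 50, "at home")
    memory_stream: list[str] = []
    # Everything within range, including the observer, is appended to memory.
    memory_stream.extend(perceive(joon, [joon, tyler, cup, far_away], radius=5))
    print(memory_stream)
```

Because every tick of perception appends to the memory stream, even this toy version shows why the volume of stored observations grows so quickly.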

So imagine if you or I were generative agents right now. I don’t need to remember what I ate for breakfast last Tuesday. That’s likely irrelevant to this conversation. But what might be relevant is the paper I wrote on generative agents, so that needs to get retrieved. That’s the key function of generative agents: of all the information they have, which is the most relevant? And how can they talk about it?
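One plausible way to frame that retrieval question in code is to rank memories by a mix of how recent, how important, and how relevant to the current topic each one is. The sketch below is an illustration under stated assumptions, not the paper’s exact formula: importance values are hand-assigned, recency decays exponentially with an assumed rate of 0.99 per game hour, relevance is cosine similarity between toy two-dimensional vectors standing in for real text embeddings, and the three terms are weighted equally. The `Memory`, `score`, and `retrieve` names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    hours_ago: float        # game hours since the memory was last accessed
    importance: float       # assumed 0..1: breakfast trivia low, a research paper high
    embedding: list[float]  # toy vector standing in for a real text embedding


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def score(mem: Memory, query: list[float], decay: float = 0.99) -> float:
    recency = decay ** mem.hours_ago          # 1.0 when fresh, shrinking over time
    relevance = cosine(mem.embedding, query)  # similarity to the current topic
    return recency + mem.importance + relevance  # equal weights (an assumption)


def retrieve(memories: list[Memory], query: list[float], k: int = 1) -> list[Memory]:
    """Return the k highest-scoring memories for the query."""
    return sorted(memories, key=lambda m: score(m, query), reverse=True)[:k]


if __name__ == "__main__":
    breakfast = Memory("ate cereal for breakfast last Tuesday", 168, 0.1, [0.0, 1.0])
    paper = Memory("wrote a paper on generative agents", 24, 0.8, [0.9, 0.1])
    topic = [1.0, 0.0]  # toy embedding for "talking about generative agents"
    print(retrieve([breakfast, paper], topic)[0].text)
```

Here the paper memory wins on all three counts, matching Park’s example. In practice the weights, the decay rate, and the embedding model would all need tuning.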

Regarding the idea that these could be future videogame NPCs, would you say any of them behaved with a distinct personality? Or did they all speak and act in roughly the same way?

There are sort of two answers to this. They were designed to be very distinct characters. And each of them had different experiences in this world, because they talked to different people. If you live with a family, the people you likely talk to most are your family. That’s what you see in these agents, and it influenced their future behavior.

So they start with distinct identities. At the start, we give them a personality description, along with their occupation and existing relationships. That input basically bootstraps their memory and influences their future behavior, and that future behavior influences still more future behavior. What these agents remember and experience is highly distinct, and they make decisions based on what they experience, so they end up behaving very differently.
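The bootstrapping step can be pictured as turning a short character description into an agent’s first memories, after which lived experience keeps piling on top. This sketch assumes the description arrives as a semicolon-separated string and that memory is a plain list of strings; the `bootstrap_memory` function and the character “Sam” are hypothetical, invented for illustration.

```python
def bootstrap_memory(seed_description: str) -> list[str]:
    """Turn a semicolon-separated character description into initial memories."""
    return [part.strip() for part in seed_description.split(";") if part.strip()]


if __name__ == "__main__":
    # Hypothetical character; the name and traits are invented for illustration.
    memory = bootstrap_memory(
        "Sam is a pharmacy clerk; Sam is shy but kind; Sam lives with his family"
    )
    # New experiences are appended, so later decisions draw on both the seed
    # description and everything the agent has experienced since.
    memory.append("Sam talked with his sister over breakfast")
    print(memory)
```

Two agents seeded with different descriptions start from different memories, and since each new experience is added to that store, their histories keep diverging from there.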

I guess at the simplest level, if you’re a teacher, you go to school; if you’re a pharmacy clerk, you go to the pharmacy. But it’s also the way you talk to each other and what you talk about. All of that changes based on how these agents are defined and what they experience.

Now, there are boundary cases, or sort of limitations, with our current approach, which uses ChatGPT. ChatGPT was fine-tuned on human preferences, and OpenAI has done a lot of hard work to make it prosocial and not toxic. Partly, that’s because ChatGPT and generative agents have different goals. ChatGPT is trying to be a really useful tool for people that minimizes risk as much as possible, so they’re actively trying to make the model not do certain things. Whereas if you’re trying to create believability, humans do have conflict, and we have arguments, and those are part of our believable experience. So you would want those in there. That’s less represented in generative agents today, because the underlying model we’re using is ChatGPT. So a lot of these agents come out very polite and very collaborative, which in some cases is believable, but it can go a little bit too far.

Do you anticipate a future where we have bots trained on all kinds of different language sets? Ignoring for now the problem of collecting or licensing training data, could you imagine, say, a model based on soap opera dialogue, or other material with more conflict?

There’s a bit of a policy angle to this, and to what we, as a society and a community, decide is the right thing to do here. From the technical angle, yes, I think we’ll have the ability to train these models more quickly. We’re already seeing people, or smaller groups other than OpenAI, replicate these large models to a surprising degree. So I think we will have that ability, to some extent.

Now, will we actually do that, or decide as a society whether it’s a good idea? I think that’s a bit of an open question. Ultimately, as academics (and I think this applies not just to this project, but to any scientific contribution we make), the higher the impact, the more we care about its points of failure and its risks. Our general philosophy here is to identify those risks, be very clear about them, and propose structures and principles that can help us mitigate them.

I think that’s a conversation we need to start having about a lot of these models. We’re already having these conversations, but where they will land is, I think, a bit of an open question. Do we want to create models that can generate bad content, toxic content, for the sake of believable simulation? In some cases, the benefit may outweigh the potential harms. In some cases, it may not. That’s a conversation I’m certainly engaged in right now with my colleagues, but it’s also not necessarily something any one researcher should be deciding.

One of the ethical considerations at the end of your paper was the question of what to do about people developing parasocial relationships with chatbots, and we’ve actually already reported on an instance of that. In some cases it feels like our main reference point for this is already science fiction. Are things moving faster than you would have anticipated?

Things are changing very quickly, even for those in the field. I think that part is absolutely true. We’re hopeful that we can have a lot of the really important ethical discussions, and at least start to establish some rough principles for dealing with these concerns. But no, it is moving fast.

It’s interesting that we ultimately decided to refer back to science fiction movies to really talk about some of these ethical concerns. There was an interesting moment, and maybe it illustrates this point a little: we felt strongly that we needed an ethics section in the paper, covering the risks and whatnot, but as we were thinking about it, the concerns we first identified were simply not something the academic community had really talked about at that point. So there wasn’t any literature per se that we could refer to. That’s when we decided, you know, we might just have to look at science fiction and see what it does. That’s where those references came from.

And I think you’re right. We’re getting to that point fast enough that we are now relying, to some extent, on the creativity of these fiction writers. In the field of human-computer interaction, there is actually something called “generative fiction,” so there are people working on fiction for the purpose of foreseeing potential dangers. It’s something we respect. We’re moving fast, and we’re very eager to think deeply about these questions.

You mentioned the next five to 10 years there. People have been working on machine learning for a while now, but again, from the lay perspective at least, it seems like we’re suddenly confronted with a burst of progress. Is this going to slow down, or is it a rocket ship?

What I think is interesting about the current era is that even those heavily involved in developing these technologies aren’t so clear on the answer to your question. I find that quite interesting, because if you look back, say, 40 or 50 years, to when we were building transistors in the first few decades, and even today, we have had a very clear view of how fast things would progress. We have Moore’s Law, or at least a certain understanding that, at every stage, this is how fast things will advance.

What is unique about what we’re seeing today, I think, is that a lot of the behaviors or capabilities of AI systems are emergent. That is to say, when we first started building them, we just didn’t think these models or systems would do certain things, but we later find that they can. That makes it a little harder, even for the scientific community, to make a clear prediction about what the next five years will look like. So my honest answer is, I’m not sure.

Now, there are certain things we can say, and they generally fall within the scope of what I would call optimization and performance. Running 25 agents today took a fair amount of resources and time. It’s not a particularly cheap simulation to run, even at that scale. What I can say is that within a year, I think there will be some games or applications inspired by generative agents. In two to three years, there may be some applications that make a serious attempt at creating something like generative agents in a more commercial sense. In five to 10 years, I think it will be much easier to create these kinds of applications. Whereas today, or even within a window of one or two years, I think getting there would be a stretch.

Now, within the next 30 years, I think it may be possible that computation will be cheap enough for us to create an agent society with far more than 25 agents. I think in the paper we talked about numbers like a million agents. I think we can get there, and these predictions are slightly easier for a computer scientist to make, because they have more to do with computational power. So those are the things I think I can say for now. But in terms of what AI will do? Hard to say.