As Roblox has grown over the past 16+ years, so have the scale and complexity of the technical infrastructure that supports millions of immersive 3D co-experiences. The number of machines we support has more than tripled over the past two years, from approximately 36,000 as of June 30, 2021 to nearly 145,000 today. Supporting these always-on experiences for people all over the world requires more than 1,000 internal services. To help us control costs and network latency, we deploy and manage these machines as part of a custom-built, hybrid private cloud infrastructure that runs primarily on premises.

Our infrastructure currently supports more than 70 million daily active users around the world, including the creators who rely on Roblox’s economy for their businesses. All of these millions of people expect a very high level of reliability. Given the immersive nature of our experiences, there is an extremely low tolerance for lag or latency, let alone outages. Roblox is a platform for communication and connection, where people come together in immersive 3D experiences. When people are communicating as their avatars in an immersive space, even minor delays or glitches are more noticeable than they are on a text thread or a conference call.

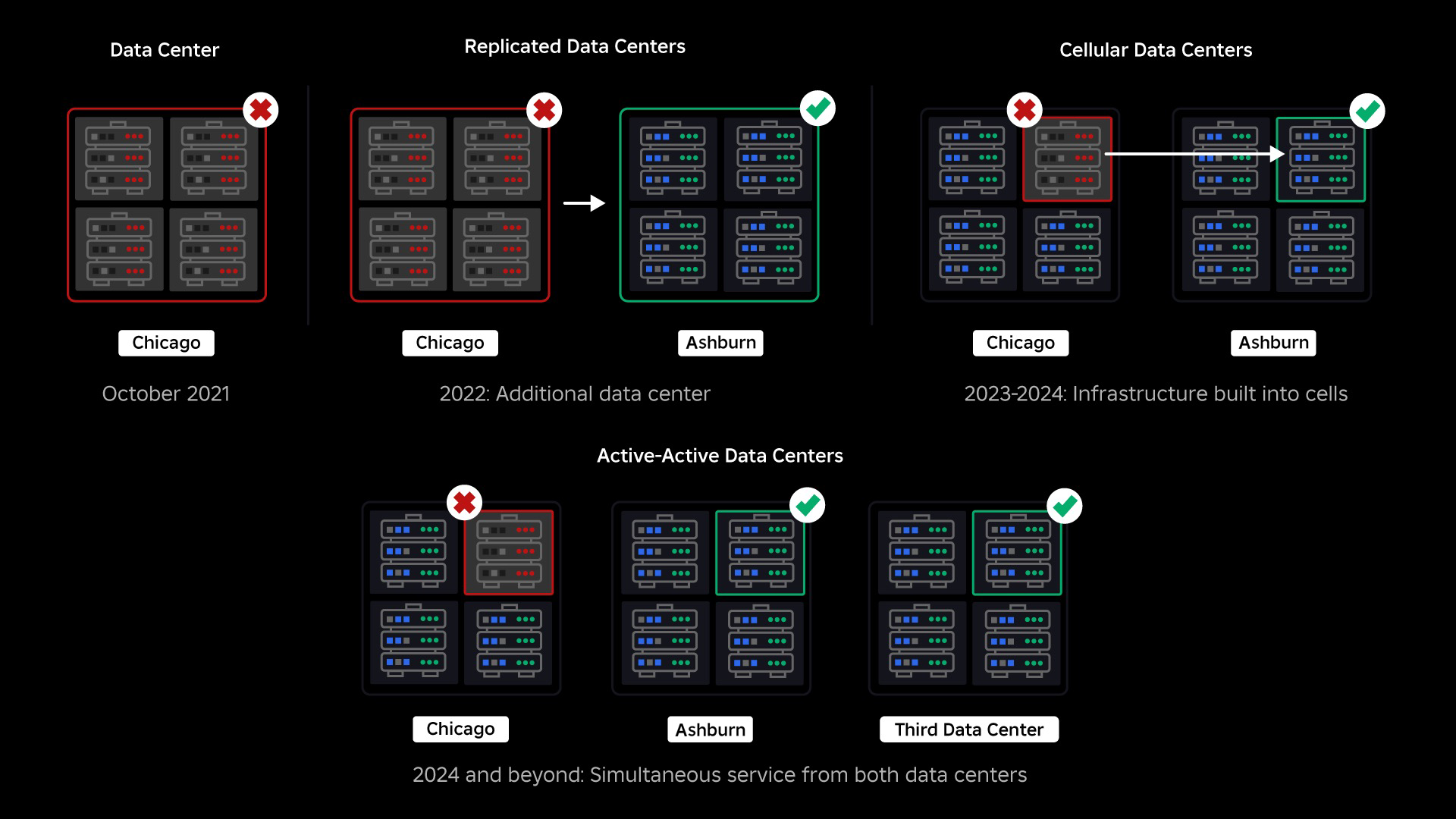

In October 2021, we experienced a system-wide outage. It started small, with an issue in one component in one data center. But it spread quickly as we were investigating and ultimately resulted in a 73-hour outage. At the time, we shared both details about what happened and some of our early learnings from the issue. Since then, we’ve been studying those learnings and working to increase the resilience of our infrastructure to the types of failures that occur in all large-scale systems due to factors like extreme traffic spikes, weather, hardware failure, software bugs, or just humans making mistakes. When these failures occur, how do we ensure that an issue in a single component, or group of components, does not spread to the full system? This question has been our focus for the past two years, and while the work is ongoing, what we’ve done so far is already paying off. For example, in the first half of 2023, we saved 125 million engagement hours per month compared to the first half of 2022. Today, we’re sharing the work we’ve already done, as well as our longer-term vision for building a more resilient infrastructure system.

Building a Backstop

Within large-scale infrastructure systems, small-scale failures happen many times a day. If one machine has an issue and has to be taken out of service, that’s manageable because most companies maintain multiple instances of their back-end services. So when a single instance fails, others pick up the workload. To handle these frequent failures, requests are generally set to retry automatically if they get an error.

This becomes challenging when a system or person retries too aggressively, which can become a way for those small-scale failures to propagate throughout the infrastructure to other services and systems. If the network or a user retries persistently enough, it will eventually overload every instance of that service, and potentially other systems, globally. Our 2021 outage was the result of something that’s fairly common in large-scale systems: a failure starts small, then propagates through the system, getting big so quickly that it’s hard to resolve before everything goes down.
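One common way to keep automatic retries from amplifying a small failure is to combine capped exponential backoff with jitter and a retry budget. The following is a minimal sketch of that general technique, not a description of Roblox’s retry policy; the names `RetryBudget`, `backoff_delay`, and `call_with_retries`, and all default values, are assumptions made for illustration.

```python
import random
import time


class RetryBudget:
    """Caps retries at a fraction of all requests seen (hypothetical policy)."""

    def __init__(self, ratio=0.1):
        self.ratio = ratio
        self.requests = 0
        self.retries = 0

    def record_request(self):
        self.requests += 1

    def try_spend(self):
        # Allow a retry only while total retries stay within ratio * requests.
        if self.retries + 1 <= self.ratio * self.requests:
            self.retries += 1
            return True
        return False


def backoff_delay(attempt, base=0.1, cap=5.0, rng=random):
    """Full-jitter delay: uniform in [0, min(cap, base * 2**attempt)] seconds."""
    return rng.uniform(0.0, min(cap, base * (2 ** attempt)))


def call_with_retries(operation, budget, max_attempts=4, sleep=time.sleep):
    """Call operation(); on failure, retry with backoff while the budget allows."""
    budget.record_request()
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception:
            last_attempt = attempt == max_attempts - 1
            # Give up when attempts are exhausted or the shared budget is spent,
            # so a struggling service is not hit with unbounded retry traffic.
            if last_attempt or not budget.try_spend():
                raise
            sleep(backoff_delay(attempt))
```

Because the budget is shared across calls, a client that sees widespread errors stops retrying quickly instead of multiplying the load on instances that are already struggling.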

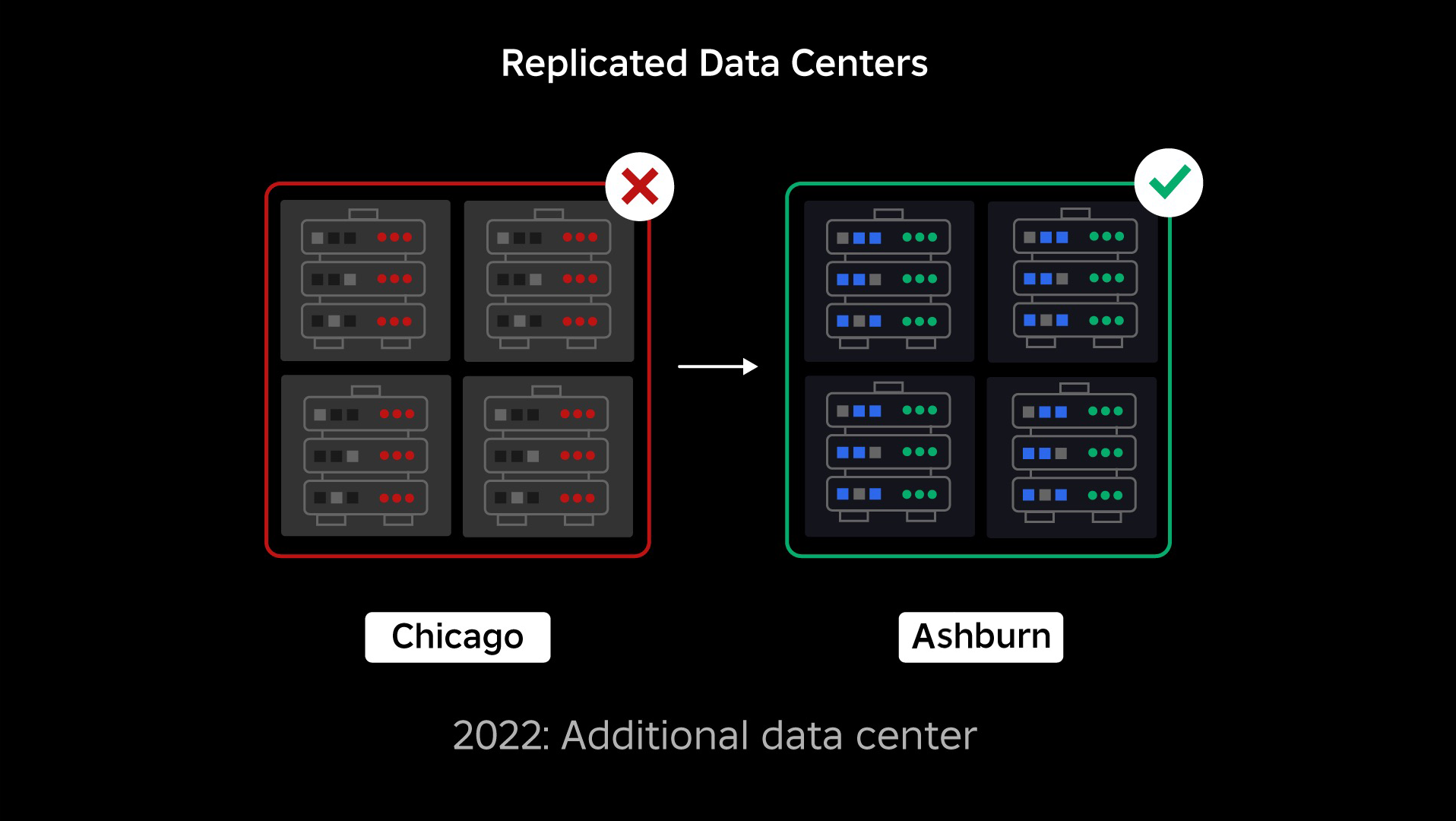

At the time of our outage, we had one active data center (with components within it acting as backup). We needed the ability to fail over manually to a new data center when an issue brought the existing one down. Our first priority was to ensure we had a backup deployment of Roblox, so we built that backup in a new data center, located in a different geographic region. That added protection for the worst-case scenario: an outage spreading to enough components within a data center that it becomes entirely inoperable. We now have one data center handling workloads (active) and one on standby, serving as backup (passive). Our long-term goal is to move from this active-passive configuration to an active-active configuration, in which both data centers handle workloads, with a load balancer distributing requests between them based on latency, capacity, and health. Once this is in place, we expect to have even higher reliability for all of Roblox and be able to fail over nearly instantaneously rather than over several hours.
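To make the active-active idea concrete, here is a small sketch of how a load balancer might weigh data centers by health, latency, and capacity. The `DataCenter` fields and the weighting formula are assumptions chosen for illustration; the actual balancing logic is not described in this post.

```python
from dataclasses import dataclass
import random


@dataclass
class DataCenter:
    name: str
    healthy: bool
    latency_ms: float      # observed latency from the client's region
    capacity_free: float   # fraction of capacity still available, 0.0 to 1.0


def routing_weights(datacenters):
    """Return {name: weight}; unhealthy or full data centers get weight 0."""
    weights = {}
    for dc in datacenters:
        if not dc.healthy or dc.capacity_free <= 0:
            weights[dc.name] = 0.0
        else:
            # More free capacity and lower latency both raise the weight.
            weights[dc.name] = dc.capacity_free / max(dc.latency_ms, 1.0)
    return weights


def pick_datacenter(datacenters, rng=random):
    """Pick a data center at random in proportion to its routing weight."""
    weights = routing_weights(datacenters)
    names = [name for name, weight in weights.items() if weight > 0]
    if not names:
        raise RuntimeError("no healthy data center available")
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]
```

In this sketch, failover is just a weight dropping to zero: once a data center is marked unhealthy, all new requests go to the remaining one without a manual switch.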

Moving to a Cellular Infrastructure

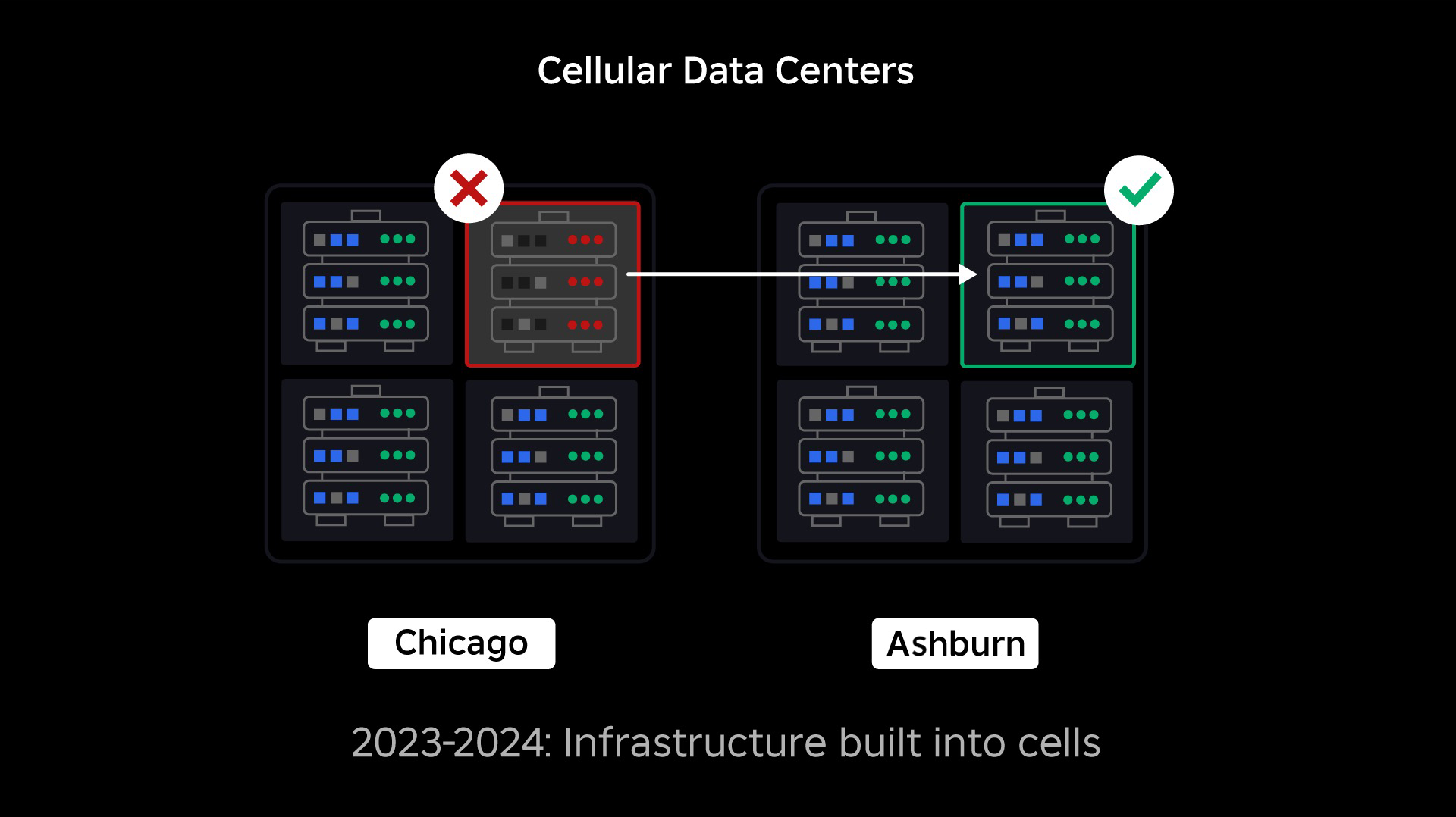

Our next priority was to create strong blast walls inside each data center to reduce the possibility of an entire data center failing. Cells (some companies call them clusters) are essentially a set of machines and are how we’re creating these walls. We replicate services both within and across cells for added redundancy. Ultimately, we want all services at Roblox to run in cells so they can benefit from both strong blast walls and redundancy. If a cell is no longer functional, it can safely be deactivated. Replication across cells enables the service to keep running while the cell is repaired. In some cases, cell repair might mean a complete reprovisioning of the cell. Across the industry, wiping and reprovisioning an individual machine, or a small set of machines, is fairly common, but doing this for an entire cell, which contains ~1,400 machines, is not.

For this to work, these cells need to be largely uniform, so we can quickly and efficiently move workloads from one cell to another. We have set certain requirements that services need to meet before they run in a cell. For example, services must be containerized, which makes them much more portable and prevents anyone from making configuration changes at the OS level. We’ve adopted an infrastructure-as-code philosophy for cells: in our source code repository, we include the definition of everything that’s in a cell so we can rebuild it quickly from scratch using automated tools.

Not all services currently meet these requirements, so we’ve worked to help service owners meet them where possible, and we’ve built new tools to make it easy to migrate services into cells when ready. For example, our new deployment tool automatically “stripes” a service deployment across cells, so service owners don’t have to think about the replication strategy. This level of rigor makes the migration process much more challenging and time-consuming, but the long-term payoff will be a system where:

- It’s far easier to contain a failure and prevent it from spreading to other cells;

- Our infrastructure engineers can be more efficient and move more quickly; and

- The engineers who build the product-level services that are ultimately deployed in cells don’t need to know or worry about which cells their services are running in.
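The “striping” of a deployment across cells mentioned above can be illustrated with a simple round-robin placement. This is only a sketch of the general idea under assumed names (`stripe_replicas`, `surviving_replicas`, and the cell labels are hypothetical); the real deployment tool is not described in detail here.

```python
def stripe_replicas(replica_count, cells):
    """Assign replicas to cells round-robin so per-cell counts differ by at most one."""
    if not cells:
        raise ValueError("at least one cell is required")
    placement = {cell: 0 for cell in cells}
    for i in range(replica_count):
        placement[cells[i % len(cells)]] += 1
    return placement


def surviving_replicas(placement, failed_cell):
    """Replicas still serving if one whole cell is deactivated."""
    return sum(count for cell, count in placement.items() if cell != failed_cell)


# Seven replicas striped over three hypothetical cells.
placement = stripe_replicas(7, ["cell-1", "cell-2", "cell-3"])
remaining = surviving_replicas(placement, "cell-1")
```

With an even spread, deactivating any single cell removes only about one cell’s share of a service’s replicas, which is what lets the service keep running while that cell is repaired or reprovisioned.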

Solving Bigger Challenges

Similar to the way fire doors are used to contain flames, cells act as strong blast walls within our infrastructure to help contain whatever issue is triggering a failure within a single cell. Eventually, all of the services that make up Roblox will be redundantly deployed inside of and across cells. Once this work is complete, issues could still propagate widely enough to make an entire cell inoperable, but it would be extremely difficult for an issue to propagate beyond that cell. And if we succeed in making cells interchangeable, recovery will be significantly faster because we’ll be able to fail over to a different cell and keep the issue from impacting end users.

Where this gets tricky is separating these cells enough to reduce the opportunity to propagate errors, while keeping things performant and functional. In a complex infrastructure system, services need to communicate with each other to share queries, information, workloads, etc. As we replicate these services into cells, we need to be thoughtful about how we manage cross-communication. In an ideal world, we redirect traffic from one unhealthy cell to other healthy cells. But how do we manage a “query of death,” one that’s causing a cell to be unhealthy? If we redirect that query to another cell, it can cause that cell to become unhealthy in just the way we’re trying to avoid. We need to find mechanisms to shift “good” traffic from unhealthy cells while detecting and squelching the traffic that’s causing cells to become unhealthy.
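One possible shape for such a mechanism, sketched here purely as an illustration, is to track which request “fingerprints” were implicated when a cell became unhealthy and to stop redirecting a fingerprint once it has been tied to failures in several cells. The class `QueryOfDeathGuard`, its threshold, and the notion of a fingerprint are all assumptions, not a description of what Roblox has built.

```python
from collections import defaultdict


class QueryOfDeathGuard:
    """Blocks a request fingerprint once it is implicated in failures on
    `threshold` distinct cells (hypothetical policy for illustration)."""

    def __init__(self, threshold=2):
        self.threshold = threshold
        self.cells_hurt = defaultdict(set)   # fingerprint -> cells it was seen harming
        self.blocked = set()

    def record_failure(self, fingerprint, cell):
        """Note that `fingerprint` was implicated when `cell` became unhealthy."""
        self.cells_hurt[fingerprint].add(cell)
        if len(self.cells_hurt[fingerprint]) >= self.threshold:
            self.blocked.add(fingerprint)

    def route(self, fingerprint, healthy_cells):
        """Return a cell for the request, or None if it should be squelched."""
        if fingerprint in self.blocked or not healthy_cells:
            return None
        # Prefer cells this fingerprint has not already been seen harming.
        hurt = self.cells_hurt.get(fingerprint, set())
        candidates = [cell for cell in healthy_cells if cell not in hurt]
        return (candidates or list(healthy_cells))[0]
```

The trade-off in a scheme like this is the threshold: set too low, a coincidental failure blocks legitimate traffic; set too high, a harmful query takes down more cells before it is stopped.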

In the short term, we have deployed copies of computing services to each compute cell so that most requests to the data center can be served by a single cell. We’re also load balancing traffic across cells. Looking further out, we’ve begun building a next-generation service discovery process that will be leveraged by a service mesh, which we hope to complete in 2024. This will allow us to implement sophisticated policies that permit cross-cell communication only when it won’t negatively impact the failover cells. Also coming in 2024 will be a method for directing dependent requests to a service version in the same cell, which will minimize cross-cell traffic and thereby reduce the risk of cross-cell propagation of failures.
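The idea of directing dependent requests to an instance in the same cell can be sketched as a simple preference rule in service discovery. The function below is an illustrative assumption (the name `choose_instance`, the tuple layout, and the `allow_cross_cell` flag are hypothetical) rather than the planned service mesh behavior.

```python
def choose_instance(caller_cell, instances, allow_cross_cell=True):
    """Pick a healthy instance, preferring the caller's own cell.

    `instances` is a list of (cell, address, healthy) tuples.
    Returns an address, or None if no permitted instance is healthy.
    """
    healthy = [(cell, addr) for cell, addr, ok in instances if ok]
    local = [addr for cell, addr in healthy if cell == caller_cell]
    if local:
        return local[0]
    # Cross-cell calls are a fallback that policy may switch off, for example
    # to keep a struggling cell's traffic from spilling into failover cells.
    if allow_cross_cell:
        remote = [addr for cell, addr in healthy if cell != caller_cell]
        if remote:
            return remote[0]
    return None
```

Keeping the dependency chain inside one cell means a failure in that chain stays inside the same blast wall, and the cross-cell fallback becomes an explicit policy decision instead of the default.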

At peak, more than 70 percent of our back-end service traffic is being served out of cells and we’ve learned a lot about how to create cells, but we anticipate more research and testing as we continue to migrate our services through 2024 and beyond. As we progress, these blast walls will become increasingly strong.

Migrating an always-on infrastructure

Roblox is a global platform supporting users all over the world, so we can’t move services during off-peak or “down time,” which further complicates the process of migrating all of our machines into cells and our services to run in those cells. We have millions of always-on experiences that need to continue to be supported, even as we move the machines they run on and the services that support them. When we started this process, we didn’t have tens of thousands of machines just sitting around unused and available to migrate these workloads onto.

We did, however, have a small number of additional machines that were purchased in anticipation of future growth. To start, we built new cells using those machines, then migrated workloads to them. We value efficiency as well as reliability, so rather than going out and buying more machines once we ran out of “spare” machines, we built more cells by wiping and reprovisioning the machines we’d migrated off of. We then migrated workloads onto those reprovisioned machines, and started the process all over again. This process is complex: as machines are replaced and freed up to be built into cells, they are not freeing up in an ideal, orderly fashion. They are physically fragmented across data halls, leaving us to provision them in a piecemeal fashion, which requires a hardware-level defragmentation process to keep the hardware locations aligned with large-scale physical failure domains.

A portion of our infrastructure engineering team is focused on migrating existing workloads from our legacy, or “pre-cell,” environment into cells. This work will continue until we’ve migrated thousands of different infrastructure services and thousands of back-end services into newly built cells. We expect this will take all of next year and possibly into 2025, due to some complicating factors. First, this work requires robust tooling to be built. For example, we need tooling to automatically rebalance large numbers of services when we deploy a new cell, without impacting our users. We’ve also seen services that were built with assumptions about our infrastructure. We need to revise these services so they do not depend on things that could change in the future as we move into cells. We’ve also implemented both a way to search for known design patterns that won’t work well with cellular architecture and a methodical testing process for each service that’s migrated. These processes help us head off any user-facing issues caused by a service being incompatible with cells.

Today, close to 30,000 machines are being managed by cells. It’s only a fraction of our total fleet, but it’s been a very smooth transition so far with no negative player impact. Our ultimate goal is for our systems to achieve 99.99 percent user uptime every month, meaning we would disrupt no more than 0.01 percent of engagement hours. Industry-wide, downtime cannot be completely eliminated, but our goal is to reduce any Roblox downtime to a degree that it’s nearly unnoticeable.
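To give a feel for how tight a 99.99 percent target is, the short calculation below converts it into an allowance. The function name `allowed_disruption` and the sample figure of one billion monthly engagement hours are assumptions for illustration only, not numbers reported in this post.

```python
def allowed_disruption(total, uptime_target=0.9999):
    """Amount of `total` that may be disrupted while still meeting the target."""
    return total * (1.0 - uptime_target)


# Expressed as wall-clock time in a 30-day month (43,200 minutes).
minutes_in_30_days = 30 * 24 * 60
downtime_minutes = allowed_disruption(minutes_in_30_days)

# Expressed as engagement hours, using a made-up monthly total.
hypothetical_monthly_hours = 1_000_000_000
disrupted_hours = allowed_disruption(hypothetical_monthly_hours)
```

Measured in clock time, the allowance works out to about 4.32 minutes in a 30-day month. Because the goal here is stated in engagement hours rather than minutes, a short disruption at peak counts for more than the same disruption at a quiet time.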

Future-proofing as we scale

While our early efforts are proving successful, our work on cells is far from done. As Roblox continues to scale, we will keep working to improve the efficiency and resiliency of our systems through this and other technologies. As we go, the platform will become increasingly resilient to issues, and any issues that occur should become progressively less visible and disruptive to the people on our platform.

In summary, to date, we have:

- Built a second data center and successfully achieved active/passive status.

- Created cells in our active and passive data centers and successfully migrated more than 70 percent of our back-end service traffic to these cells.

- Set in place the requirements and best practices we’ll need to follow to keep all cells uniform as we continue to migrate the rest of our infrastructure.

- Kicked off a continuous process of building stronger “blast walls” between cells.

As these cells become more interchangeable, there will be less crosstalk between cells. This unlocks some very interesting opportunities for us in terms of increasing automation around monitoring, troubleshooting, and even shifting workloads automatically.

In September we also started running active/active experiments across our data centers. This is another mechanism we’re testing to improve reliability and minimize failover times. These experiments helped identify a number of system design patterns, largely around data access, that we need to rework as we push toward becoming fully active-active. Overall, the experiments were successful enough that we have left them running for the traffic from a limited number of our users.

We’re excited to keep driving this work forward to bring greater efficiency and resiliency to the platform. This work on cells and active-active infrastructure, along with our other efforts, will make it possible for us to grow into a reliable, high-performing utility for millions of people and to continue to scale as we work to connect a billion people in real time.